Introduction

In February 2026, ByteDance launched Seedance 2.0, marking the transition of AI video generation into a productive era. This tool, hailed as a game-changer, addresses the pain points of audio-visual disconnection with its dual-branch diffusion transformer architecture, supporting 8K resolution output and multimodal mixed input, making creation more controllable and efficient. This article reveals how Seedance 2.0 reshapes the video creation ecosystem through a week of in-depth testing.

If the emergence of Sora in 2024 was akin to dropping a nuclear bomb, the limited internal testing of Seedance 2.0 in early February 2026 signifies that AIGC has officially moved from a flashy stage to a productive era.

As a professional engaged in AI content creation for three years, I have witnessed the evolution of AI video generation from rudimentary to nearly indistinguishable from reality. From early issues like body distortion and audio-visual mismatches to today’s cinematic quality, each technological iteration has been a pleasant surprise.

The producer of “Black Myth: Wukong,” Feng Ji, even remarked, “The childhood of AIGC has ended.”

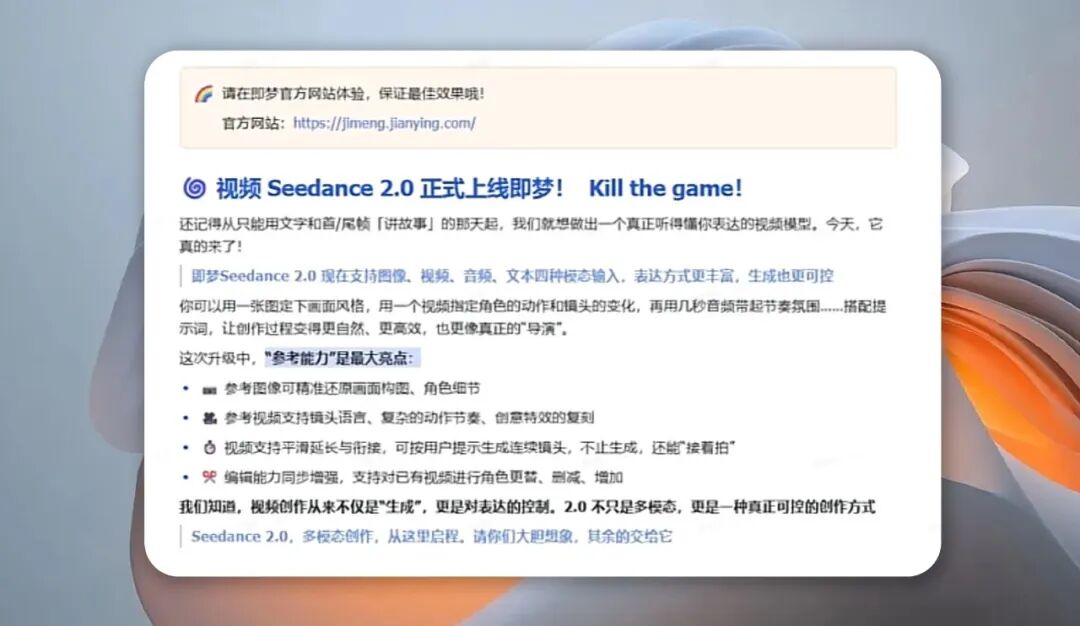

Seedance 2.0 is referred to as a game-changer in AI video, claiming to elevate AI video from a toy-level demonstration to scalable and profitable industrial productivity.

With anticipation and scrutiny, I spent a week deeply testing its features, from basic functionalities to professional scenarios. Today, I will share my first-hand experience of this highly anticipated AI video model.

Testing Criteria

Before diving into the evaluation, let me outline my core testing dimensions: the practical effects of the technical architecture, the controllability of multimodal input, the quality and coherence of generated videos, adaptability to different scenarios, and the ease of use and cost-effectiveness that ordinary users care about most.

For most creators, no matter how advanced the technology, if it cannot be applied or is difficult to use, it remains a castle in the air. Seedance 2.0’s first impression is that it breaks the barrier between professionalism and ease of use, showcasing hard power for tech enthusiasts while allowing novices to quickly produce videos.

Technical Breakthroughs

Let’s start with the core technical breakthrough: the dual-branch diffusion transformer architecture. To be honest, when I first encountered this technical term, I found it somewhat dry.

However, after hands-on experience, I realized that this technology effectively addresses the biggest pain point that has long plagued AI video: audio-visual disconnection.

Traditional AI video generation typically generates visuals first and then matches audio, often leading to mismatches in lip-syncing, footsteps, and background music rhythm. Even manual adjustments in post-production rarely achieve perfect synchronization.

Seedance 2.0’s dual-branch architecture allows visual and audio elements to grow in sync. One branch generates visual details, movements, lighting, and camera work, while the other simultaneously models voice, sound effects, and background music, ensuring real-time interaction and consistency between audio and visuals.

I conducted a simple test: I input the text “A girl playing guitar and singing in a folk style, with the camera slowly zooming in from a long shot to a close-up of her hands, accompanied by the original sound of guitar playing and slight ambient sound,” while uploading a reference image of a girl playing guitar and a piece of folk guitar audio.

The generation took about 2 minutes and 30 seconds, and the result was impressive: the girl’s finger movements were precise and perfectly synchronized with the guitar audio’s rhythm, with lip movements matching the lyrics almost at a human level. The zoom-in process was smooth and natural, without any stuttering or blurriness, and the ambient sound was well-balanced, not overpowering the vocals and guitar.

Even more surprising was the visual detail; the light and shadow changes fit the scene, with clear details of the guitar strings and wood grain, and even the subtle movements of the girl’s fingertips were accurately reproduced, which was hard to achieve in previous AI video models.

In addition to audio-visual synchronization, Seedance 2.0’s four-modal mixed input feature maximizes creative control. It supports simultaneous input of text, images, videos, and audio, allowing the upload of up to 9 images, 3 video clips, and 3 audio segments, along with a dedicated @mention system that enables precise control over the use of each reference material, completely eliminating the awkwardness of relying on AI to guess input prompts.

As a creator who often produces narrative shorts, my biggest headache has been character consistency and camera coherence, which Seedance 2.0’s character anchoring technology perfectly resolves.

Currently, while the official model parameters have not been disclosed, some core parameter details are clear based on industry breakdowns and my practical tests: Seedance 2.0’s training data includes over 1 billion video clips and 500 million audio materials, incorporating a wealth of cinematic and commercial-grade content, with a training duration exceeding 6 months, which underpins its high-quality generation capabilities.

In terms of output parameters, it supports a maximum resolution of 8K, with frame rates adjustable between 24fps, 30fps, and 60fps, with a default generation frame rate of 30fps, perfectly matching the needs of mainstream short videos and film previews; the maximum bitrate reaches 10Mbps, ensuring clarity and detail in visuals, even when zooming in on details, avoiding blurriness or jagged edges.

Seedance 2.0’s 8K output capability also meets the broadcasting needs of top-tier artistic events like the Spring Festival Gala, confirming its industrial-grade strength—after all, programs like “Song of the Wind” and “God of Flowers” at the 2026 CCTV Spring Festival Gala utilized its visual creation capabilities, stunning audiences nationwide.

Limitations

Of course, no product is perfect, and Seedance 2.0 has some shortcomings that I genuinely felt during testing.

Firstly, there is a duration limitation; the standard generation duration is between 5 to 15 seconds. While it supports video extension functionality, the extended video experiences a slight decline in temporal coherence, which still poses limitations for users needing to create longer videos.

In terms of handling complex physical details, there is room for improvement. For instance, when I tested generating a scene with splashing water, the water flow appeared natural, but the details were not as refined as real-life filming, and the fabric’s wrinkles exhibited slight unnaturalness during rapid movements.

Additionally, cost-effectiveness is a point ordinary users need to consider. The basic membership for Seedance 2.0 is 69 yuan per month, and with a pay-per-generation model, each 10-second video costs about $3, which may not be low for occasional creators. However, for professional creators and businesses, it still offers high cost-effectiveness compared to manual shooting and editing costs.

Moreover, besides Ji Meng, the new model can also be used in Doubao, Jianying, and Xiaoyun.

After all, generating a 15-second professional video only takes about 3 minutes, while manual shooting and editing may require several hours or even longer.

Ease of Use

Lastly, let’s discuss the ease of use.

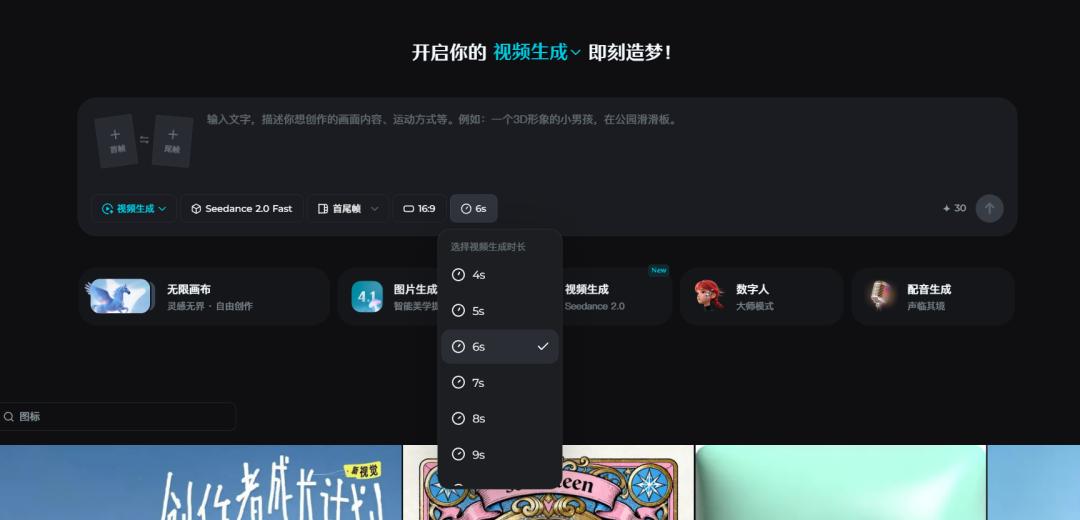

As someone who frequently uses AI creation tools, I found Seedance 2.0 incredibly easy to navigate. The interface is simple and intuitive, with core functions clearly visible. Even users with no video creation experience can complete a video generation in about 3 minutes by following the prompts.

Its Chinese support is very user-friendly, allowing natural language prompts to accurately trigger needs without the need for complex coding or technical jargon. Coupled with the @mention system, users can precisely control creative details, truly achieving “what you think is what you get.”

Conclusion

After a week of testing, Seedance 2.0 left me pleasantly surprised and practical. It is no longer just an AI tool for “playing around” but a genuinely applicable productivity tool that combines leading technical strength with user-friendliness, catering to diverse needs from personal novices to professional practitioners, and from self-media creation to industrial-grade production.

Its emergence indeed propels the progress of the AI video generation industry, enabling more people to easily realize their creative ideas.

If you are a self-media creator or an e-commerce merchant needing to quickly generate high-quality short videos or marketing videos, Seedance 2.0 is definitely worth a try. If you are a film industry practitioner needing to quickly produce storyboard previews or concept designs, it can also become your reliable assistant.

However, if you need to create long videos or have very high demands for physical details, you may still need to pair it with other tools for post-optimization.

I also look forward to Seedance 2.0 breaking through duration limitations, enhancing the handling of complex physical details, and reducing usage costs, allowing more creators to enjoy the convenience brought by AI technology. After all, the core of AI creation has never been to replace humans but to help them unleash creativity and improve efficiency, and Seedance 2.0 is steadily moving in that direction.

Comments

Discussion is powered by Giscus (GitHub Discussions). Add

repo,repoID,category, andcategoryIDunder[params.comments.giscus]inhugo.tomlusing the values from the Giscus setup tool.