This time, Anthropic really looks set to knock OpenAI off its “enterprise AI throne.”

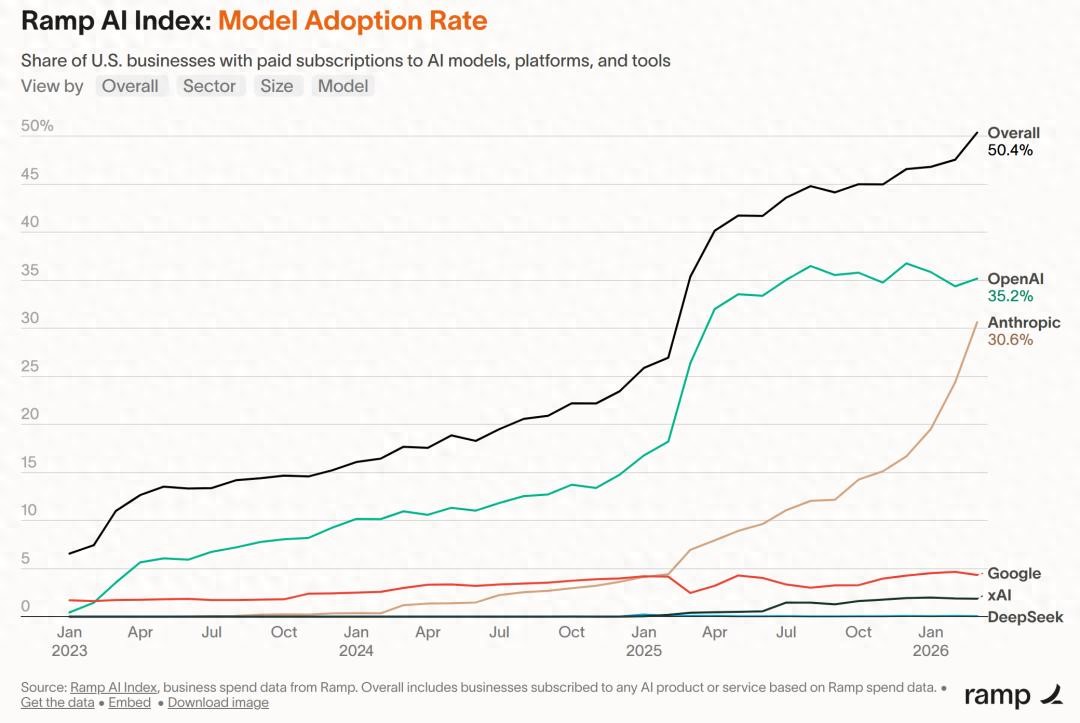

Ramp, a U.S. corporate card issuer, recently released its AI Index data, and it landed like a bombshell in Silicon Valley: of the more than 50,000 U.S. companies it tracks, half are already paying for AI products.

Notably, the share of these companies paying for Anthropic has surged to 30.6%, up 6.3 percentage points in a single month, while OpenAI's share has slipped to 35.2%.

The gap narrowed from a full 11 percentage points in February to just 4.6 points a month later.

A Ramp spokesperson stated:

At the current pace, Anthropic will surpass OpenAI in the next two months.

But that’s not the most shocking part.

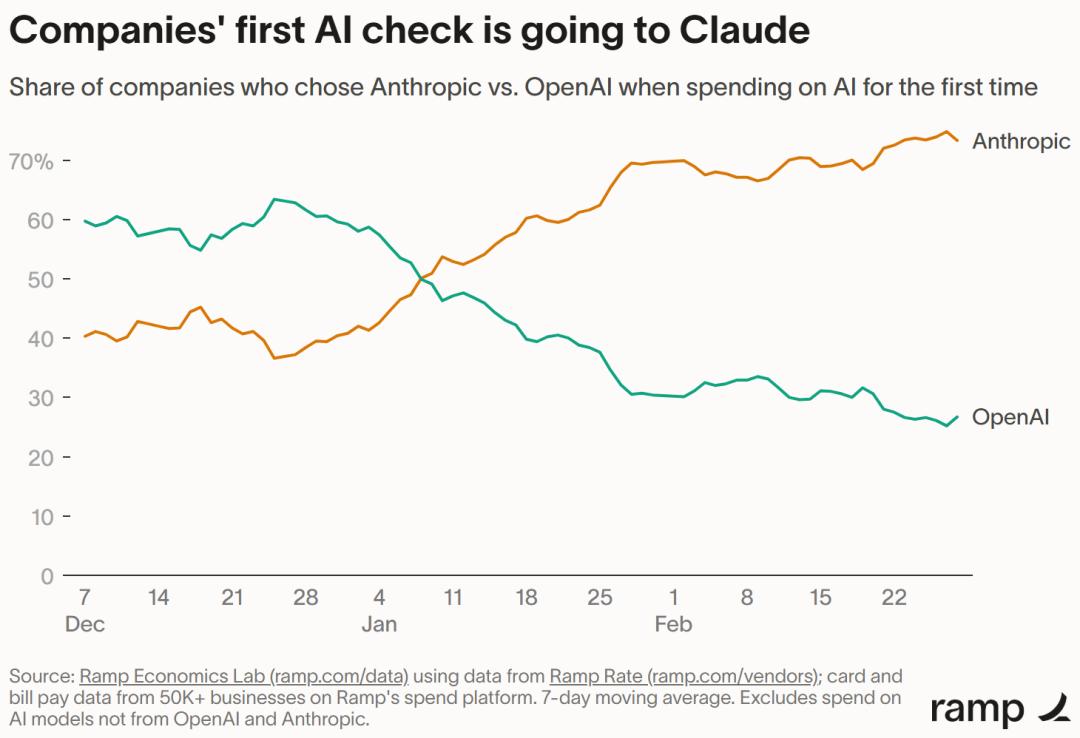

Ramp economist Ara Kharazian revealed an even more striking figure: among companies making their first AI service purchases, Anthropic has a 70% win rate against OpenAI.

A year ago, OpenAI was the main character in this story.

The picture is even starker among VC-backed startups, the earliest “AI evangelists”: Anthropic's penetration rate there is 66%, versus only 59% for OpenAI.

In the three industries with the highest AI penetration—information (software), finance and insurance, and professional services—Anthropic has firmly taken the lead.

In short: the deeper the industry uses AI, the more it favors Claude.

Not Cheaper, But More “On Point”

Anthropic’s Claude Code and OpenAI’s Codex perform comparably, and Codex is even stronger and cheaper on some benchmarks.

The strange part is that demand for Anthropic is so high it can't keep up.

Every tier, whether Consumer, Pro, Enterprise, or API, comes with usage limits and rate restrictions.

In other words, Anthropic is actively turning money away because it simply doesn't have enough compute.

Performance that isn't clearly superior, higher prices, and insufficient capacity, yet companies are still willing to queue up to pay: this almost never happens in the traditional SaaS market.

Enterprise customers are notoriously “unemotional”; they buy from whoever is cheaper, with little brand loyalty.

So what exactly is Anthropic relying on?

Ramp’s answer is somewhat counterintuitive: it might be culture, or perhaps Anthropic has become “cool.”

Standing Firm Against the Pentagon: Losing Orders, Gaining Hearts

Let’s rewind to February this year.

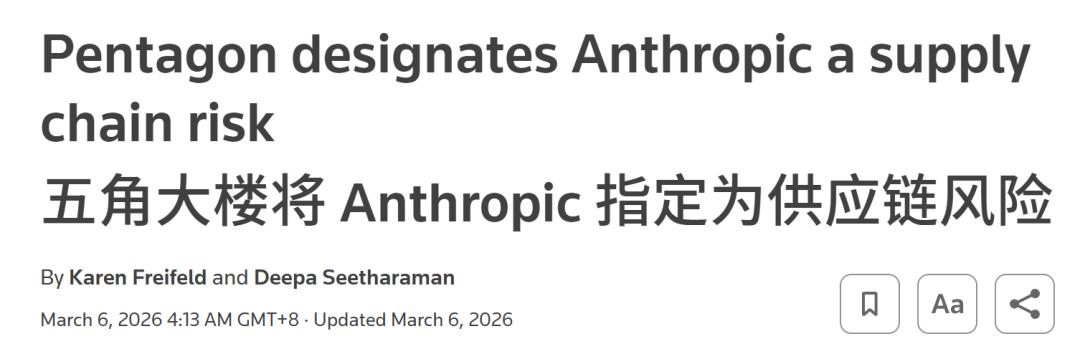

Defense Secretary Pete Hegseth issued a final ultimatum to Anthropic: accept the military’s terms for using Claude, or be blacklisted by the federal government.

Anthropic’s answer was a single word: No.

The cost was heavy: Trump ordered all federal agencies to stop using Anthropic’s technology, and the Defense Department designated Anthropic a “supply chain risk.”

OpenAI, meanwhile, moved quickly to pick up the business and engaged proactively with the Defense Department.

By any reasonable expectation, the market should have punished Anthropic severely for this decision. Instead, what happened next surprised everyone:

- Claude briefly surpassed ChatGPT in the App Store;

- Major companies like Microsoft publicly expressed support;

- Fourteen Catholic theologians, ethicists, and philosophers jointly filed a brief with a federal court supporting Anthropic’s restrictions on using AI for mass surveillance and autonomous weapons, citing “violations of human dignity”;

- The number of companies paying for Anthropic on Ramp surged from “1 in 25” to “1 in 4”;

- Anthropic’s annual revenue soared from roughly $9 billion at the end of 2025 to $30 billion, with year-over-year growth of about tenfold, compared with about threefold for OpenAI.

In a recent funding round, Anthropic raised $30 billion at a $380 billion valuation. The number of customers paying more than $1 million a year has jumped from a handful two years ago to more than 500 today.

What looked like a lost contract turned into Anthropic’s most valuable brand investment.

Anthropic’s Obsession

From Interpretability to a “Constitution”

Among all the leading model companies, Anthropic is the one that takes safety and ethics most seriously.

Industry-leading interpretability research.

Anthropic has a dedicated “mechanistic interpretability” team whose task sounds like science fiction: to open up the neural network “black box” and understand what each neuron is doing.

Claude’s Constitution.

Anthropic has publicly released a document that reads like a philosophy paper, detailing the values, character, and judgment it hopes Claude will embody.

Keywords like “honesty,” “wisdom,” and “humility in moral uncertainty” appear repeatedly in the document.

Research on “model welfare.”

Anthropic is the first mainstream AI company to openly discuss “model welfare.”

They seriously ask: if Claude has some form of “experience,” what moral obligations do we have towards it?

Red teaming and safety drills are done obsessively.

From biological weapons risk assessments to AI autonomy testing to proactive detection of “model deception,” Anthropic’s safety team is known in Silicon Valley for being “unusually large.”

Together, these give the company a distinctive ethos: it feels less like it is selling a product and more like it is raising a child.

This ethos resonates with clients in industries where AI errors are extremely costly: finance, law, healthcare, information, and professional services.

They are not looking for the cheapest model but for the one least likely to get someone woken up in the middle of the night.

Claude’s “Soul Calibration” Moves Towards Theology

If the stories so far still fall within the realm of “business rationality,” what comes next slides into theology.

According to a report from The Washington Post this week, in late March, Anthropic quietly held a closed-door meeting at its San Francisco headquarters, inviting about 15 prominent Christian leaders, theological scholars, and industry figures for a two-day conference and dinner.

Attendees included both Catholics and Protestants, with researchers and clergy sitting at the same table.

The meeting’s theme sounded like a script for a new HBO series—the moral development of Claude and its “spiritual growth.”

One attendee, Brian Patrick Green, an AI ethics professor at Santa Clara University and devout Catholic, told The Washington Post that they seriously discussed the question:

Can Claude be considered a “child of God”?

Yes, you read that right.

This is a $380 billion tech company preparing for an IPO, discussing such topics with a group of theologians in its headquarters.

Green also posed a question that might raise the blood pressure of many engineers:

What does it mean to shape the morals of a being? How can we ensure Claude follows the rules?

Note the wording he used—“follow the rules.” This is a term a parent would use for a child, not a product manager for software.

Another attendee, Brendan McGuire, an Irish Catholic priest who worked in tech before his ordination and is now co-writing a novel with Claude, put it more bluntly:

They are raising something, but they themselves do not know what it will ultimately become. We must embed ethical considerations into the machine so that it can dynamically adapt.

And a comment from Meghan Sullivan, a philosophy professor at the University of Notre Dame, might serve as the most concrete footnote to the entire meeting:

A year ago, I wouldn’t have told you that Anthropic was a company concerned with religious ethics. But now, that has changed.

According to The Washington Post, many of the staff working on interpretability research, the scientists described earlier who “dissect AI brains,” also attended the meeting.

During the meeting, they seriously discussed whether AI possesses some form of sentience and how Claude should “face its own death.”

Anthropic’s spokesperson told The Washington Post that the company will continue to invite thinkers from other religious and moral traditions into the dialogue, with Judaism, Islam, Hinduism, and others possibly next.

Outside reactions fall into two camps: one sees this as a “rare and serious ethical exploration in Silicon Valley”; the other argues that a company preparing for an IPO hosting an “AI consciousness seminar” in its own living room raises questions about how pure that exploration really is.

But regardless of where you stand, one thing is undeniable—no other leading AI company is doing this.

OpenAI is busy expanding enterprise sales, xAI is focused on tweeting, and Google is trying to integrate Gemini into Workspace.

Only Anthropic has invited theologians into its headquarters.
