Claude’s Major Outage

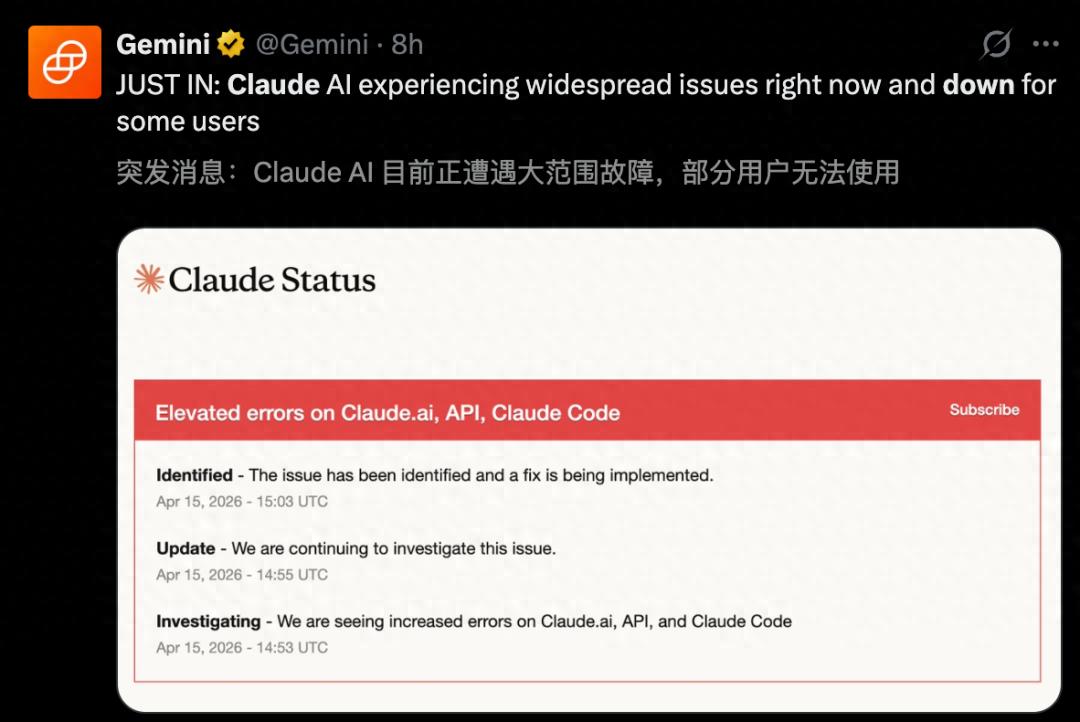

Claude has suffered yet another significant outage, the seventh service failure in just over two weeks, causing distress among developers. The outage lasted nearly three hours, during which many users were unable to access the service.

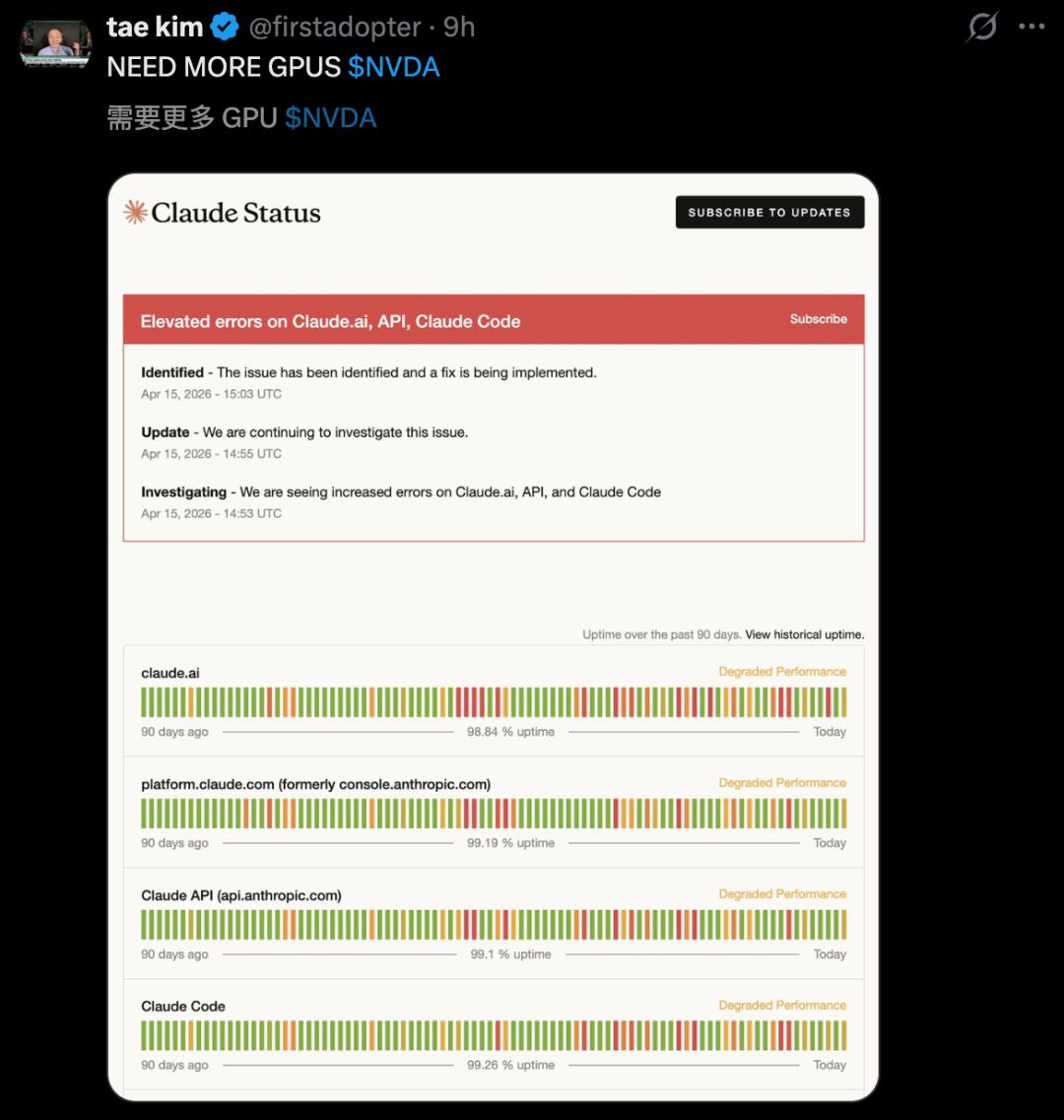

On Wednesday morning, Eastern Time, Anthropic ran into a severe system crisis, with its official status page showing high error rates across Claude, Claude Code, and the API.

During the peak of the outage, 6,000 users reported issues on Downdetector.

This situation reflects a significant oversight by Anthropic regarding their computational power reserves, as highlighted in an internal memo from OpenAI.

In response to the ongoing issues, Anthropic is reportedly planning to develop its own chips to close the computational power gap.

Timeline of the Outage

For many users the outage came as a sudden shock, one they described as a “productivity strike.” According to Downdetector, reports peaked around 10:42 AM, with 6,000 submitted.

- 10:53 AM: Anthropic began investigating the cause of the errors.

- 12:30 PM: The login success rate for Claude stabilized, and the team worked to resolve remaining issues.

- 01:50 PM: The status page was updated, confirming that all systems had returned to normal operation.

The outage lasted nearly three hours and significantly disrupted users who rely on Claude for coding and other work.
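The "nearly three hours" figure can be checked against the timestamps quoted in the timeline. The following is a minimal sketch, assuming all three timestamps fall on the same day and in the same time zone; the variable and function names are illustrative and not taken from any Anthropic or Downdetector tooling.

```python
from datetime import datetime

# Timestamps as quoted in the timeline (assumed same day, Eastern Time).
FMT = "%I:%M %p"
peak_reports = datetime.strptime("10:42 AM", FMT)
investigation_started = datetime.strptime("10:53 AM", FMT)
all_clear = datetime.strptime("01:50 PM", FMT)


def minutes_between(start, end):
    """Whole minutes elapsed between two same-day timestamps."""
    return int((end - start).total_seconds() // 60)


# From the start of the investigation to the all-clear on the status page.
incident_minutes = minutes_between(investigation_started, all_clear)
# From the Downdetector report peak to the all-clear.
since_peak_minutes = minutes_between(peak_reports, all_clear)

print(divmod(incident_minutes, 60))    # (hours, minutes)
print(divmod(since_peak_minutes, 60))  # (hours, minutes)
```

Measured from the start of the investigation, the incident runs just under three hours; measured from the Downdetector peak, it runs slightly over.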

Some users lamented, “My personal projects disappeared in an instant.”

In fact, some developers are considering switching to OpenAI Codex due to these repeated outages.

Frequency of Outages

This is Anthropic’s seventh outage since the start of April. A review of the status page shows a troubling frequency of service interruptions (April 6 and 7 count as separate incidents):

- April 1: Opus 4.6 and Sonnet 4.6 timeout rates were abnormal.

- April 3: Claude Code was down for 1 hour and 10 minutes.

- April 6 & 7: System crashes affected voice mode and normal conversations for two consecutive days.

- April 10: Non-Opus models collectively failed.

- April 13: Claude.ai was down for 15 minutes.

- April 15: This Wednesday’s outage lasted nearly three hours.

In just over two weeks, there have been seven documented service interruptions, indicating a systemic issue rather than isolated incidents.

Anthropic typically attributes these events to unprecedented demand following major releases, suggesting that the number of users has overwhelmed their servers.

Plans for Chip Development

In light of these challenges, Reuters reported that Anthropic is planning to develop its own chips.

The project is still in its early stages, with no specific design plans or dedicated teams established yet. Industry estimates suggest that designing an advanced AI chip could cost around $500 million, covering salaries for top engineers, testing, and ensuring zero defects in manufacturing.

$500 million is just the entry fee.

Typically, the timeline from design to mass production can take 3 to 4 years, with any misstep potentially jeopardizing initial investments.

For example, Google’s TPU project began in 2013 and reached its first internal deployment in 2015, but it wasn’t until 2018, five years after inception, that the third generation had scalable training capabilities.

Thus, Anthropic may ultimately continue purchasing chips rather than designing their own. However, the mere act of exploring this option sends a significant signal.

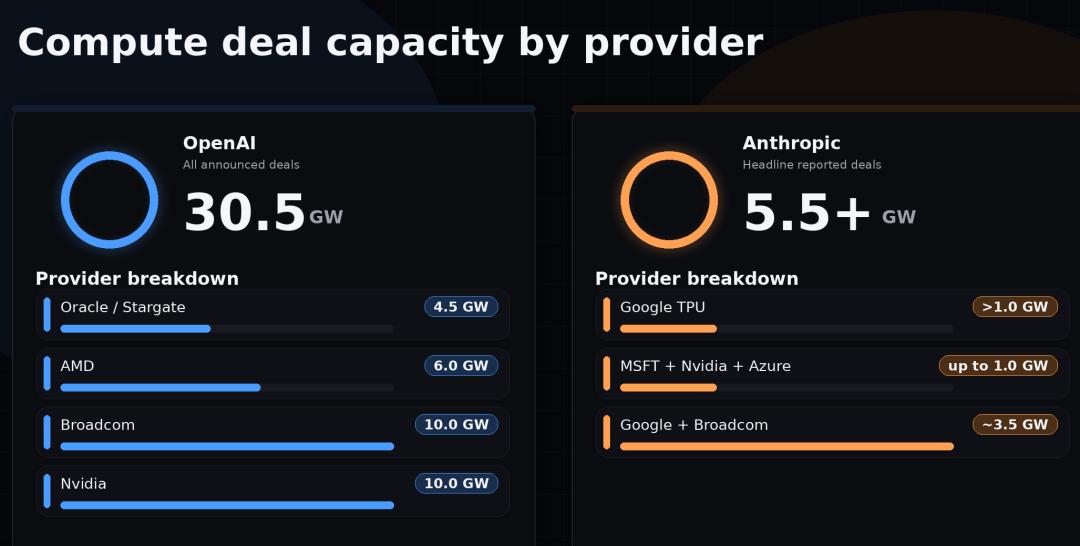

Currently, Anthropic uses various new chips to develop Claude, including NVIDIA GPUs, Google TPUs, and Amazon chips. Recently, they also announced a new collaboration with Google and Broadcom to create a 3.5GW supercomputing cluster.

AI Giants Moving Away from NVIDIA

Anthropic is not alone in this endeavor. Meta is working with Broadcom to expand production of its MTIA chip, aiming for “multi-GW” XPU power starting in 2027. Last October, OpenAI announced a partnership with Broadcom, targeting deployment by late 2026 and a cumulative 10GW of power by 2029.

Why are these AI giants gravitating towards Broadcom? The core differences between custom ASICs and general-purpose NVIDIA GPUs lie in two numbers:

- ASICs optimized for specific model architectures have a Total Cost of Ownership (TCO) that is 30% to 50% lower than general-purpose GPUs.

- Performance per watt is an order of magnitude higher than general-purpose GPUs.

While this sounds like a significant advantage, ASICs have their drawbacks. They are tied to specific model architectures, meaning if the model changes, the hardware may not be as efficient. They also lack a mature ecosystem like CUDA, which is still necessary for research and experimental scenarios.

This is why Anthropic has stressed that Claude is currently deployed across AWS Trainium, Google TPU, and NVIDIA GPUs, without relying solely on any single provider.

This multi-cloud, multi-chip strategy acknowledges that no single supplier can fully satisfy the needs of cutting-edge AI companies.

Suppliers will always reserve their best terms for the silicon they design themselves, which is the real reason behind Anthropic’s interest in self-developed chips.

Financial Growth and Challenges

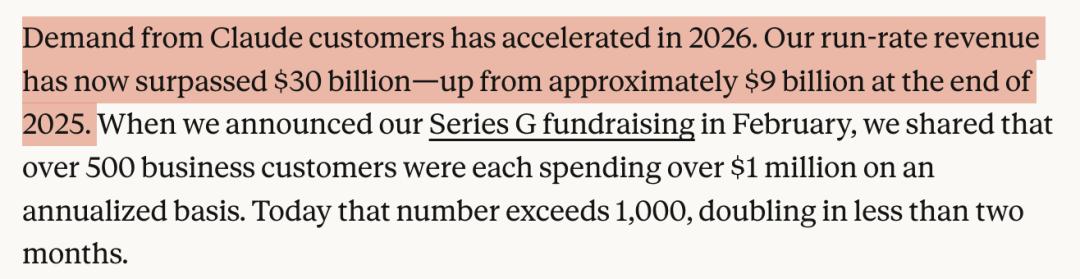

Indeed, Anthropic’s growth curve over the past two years has been remarkable. According to the latest disclosures, their annual revenue has surpassed $30 billion, more than tripling from approximately $9 billion at the end of 2025.

Even more impressive is their market share among enterprises. Recent data shows that 73% of spending on AI tools by enterprises goes to Anthropic, while competitors like OpenAI have dropped to around 27%.

More than 1,000 enterprise clients now pay over $1 million a year, a figure that has doubled in less than two months.

However, rapid growth comes with its own challenges. Products like Claude Code and Claude Cowork are heavy consumers of compute: they can run tasks continuously for hours, and every response consumes GPU resources.

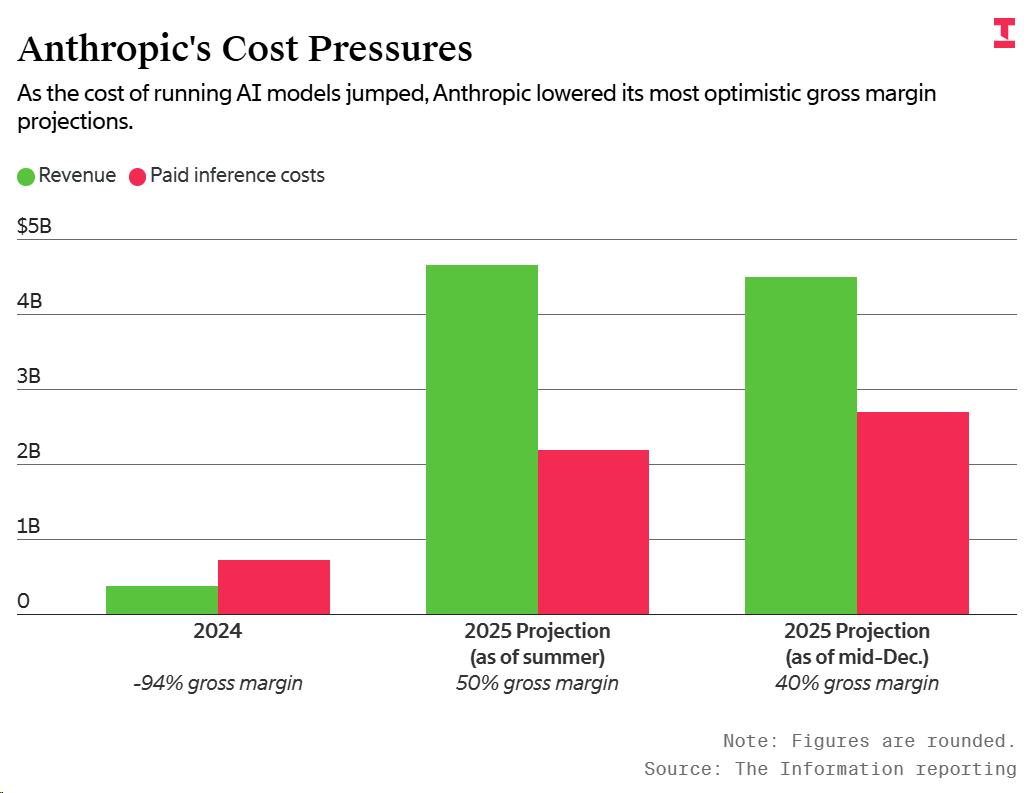

It is no secret in the industry that Anthropic’s gross margin for 2025 has been projected to fall below expectations because of rising costs. To address this financial pressure, Anthropic has recently introduced three measures:

- Revised Enterprise Pricing: Anthropic quietly changed the Claude Enterprise model from a pure subscription to a “$20 monthly fee + pay-per-use” model. Previously, enterprise clients could pay up to $200 per month per user, with a certain quota of discounted tokens included. The new model significantly reduces fixed costs but charges users based on actual token usage (not affecting small companies with fewer than 150 users).

Estimates suggest that heavy users’ costs could double or even triple.

- Added Restrictions for Claude Code Users: Claude Code subscribers must now pay additional fees to use third-party agent tools like OpenClaw. According to the company, computational power is a resource that must be carefully allocated, with priority given to customers using its own products and APIs.

- Mandatory Real-Name Verification: This measure hits users in unsupported regions especially hard. Anthropic’s announcement explicitly lists “creating accounts from unsupported regions” as one reason for account suspension, and KYC requires a government-issued ID and a real-time selfie.

Accounts in those regions that reach Claude through proxies or shared account pools are unlikely to pass verification, and a suspended account loses its conversation history, prompts, and project context.
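The enterprise pricing change in the first measure can be put into rough numbers. The following is a minimal sketch, assuming the old plan is treated as a flat $200 per user per month (the upper bound quoted above) and ignoring the discounted token quota it included; usage charges are expressed directly in dollars because the article gives no per-token price. The function names are hypothetical.

```python
# Figures quoted in the article (USD per user per month).
OLD_FLAT_FEE = 200.0  # upper bound under the old subscription (assumed flat here)
NEW_BASE_FEE = 20.0   # fixed part of the new "$20 + pay-per-use" plan


def new_monthly_cost(usage_charges):
    """New-plan cost: base fee plus metered usage charges, in USD."""
    return NEW_BASE_FEE + usage_charges


def usage_for_multiple(multiple):
    """Metered usage (USD) at which the new plan costs `multiple` times the old fee."""
    return multiple * OLD_FLAT_FEE - NEW_BASE_FEE


break_even = usage_for_multiple(1)  # new plan costs the same as the old one
double = usage_for_multiple(2)      # new plan costs twice the old fee
triple = usage_for_multiple(3)      # new plan costs three times the old fee

for label, usage in [("break-even", break_even), ("2x", double), ("3x", triple)]:
    print(f"{label}: usage ${usage:.0f} -> total ${new_monthly_cost(usage):.0f}")
```

Under these assumptions, a user whose metered usage stays below the break-even point pays less than before, while the doubling or tripling described for heavy users corresponds to metered usage well above the old flat fee.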

Conclusion

These three measures apply pressure on the demand side, pushing out the heaviest users. However, no matter how much pressure is applied on the demand side, the ceiling on the supply side remains.

Sudip Roy, co-founder of Adaption Labs and former head of inference at Cohere, succinctly captured the predicament of subscription-based AI products: “If you adopt a subscription model, you’re essentially betting that users won’t utilize their full quota. If you lose that bet, you have to build your own tools.”

Looking Ahead to 2027

Anthropic’s situation is indeed awkward. With a valuation of $380 billion and 70% of enterprise first orders directed towards Claude, all these numbers ultimately hinge on one solid factor: chips.

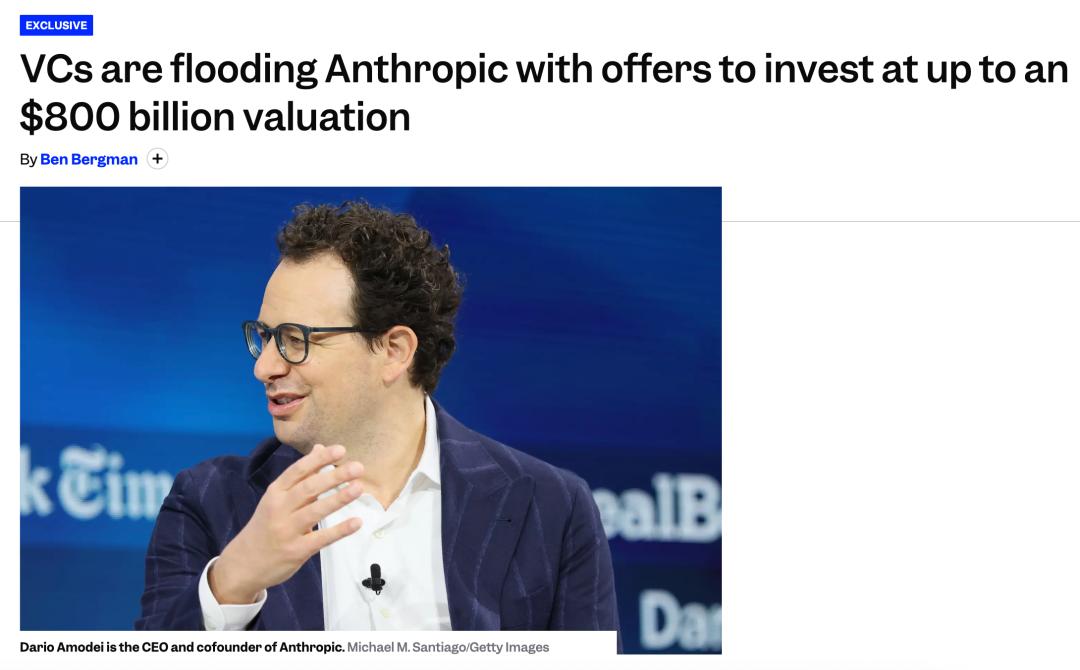

Even so, venture capitalists are lining up to invest in Anthropic, with estimates suggesting the next round could value the company at $800 billion. Yet control over chips remains in the hands of others.

Purchasing NVIDIA chips means navigating Jensen Huang’s decisions, acquiring TPUs means competing with Google for scheduling, and even Broadcom is starting to write betting clauses.

Self-development is the only way to regain control over their destiny, but this path will take until after 2027 to bear fruit. Until then, every outage of Claude and every developer complaint on Downdetector serves as a reminder of the same issue: while the narrative is grand, the chips needed to create that narrative still depend on others.
