Introduction

Last night, Anthropic released the preview version of Claude Mythos, which has caused a stir in the AI community.

Claude Mythos is touted as “the most powerful AI model to date,” representing a new level of capability that significantly surpasses its predecessor, Claude Opus 4.6.

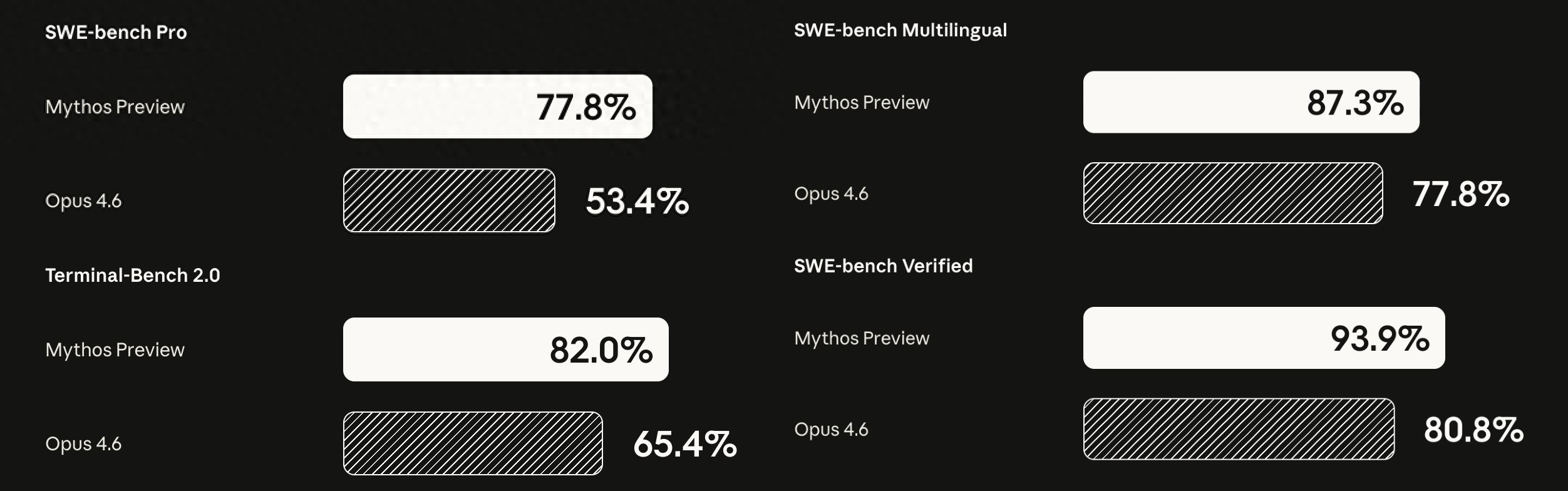

Based on current data and results, this is not just marketing hype but a genuine qualitative leap. In nearly all public benchmark tests, Claude Mythos has taken the lead, with impressive improvements:

- For software engineering, SWE-bench Verified scores jumped from 80.8% for Opus 4.6 to 93.9%, and SWE-bench Pro scores increased from 53.4% to 77.8%.

- In high-difficulty mathematical reasoning, the USAMO 2026 score soared from 42.3% to 97.6%—almost a perfect score.
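To put those jumps in perspective, here is a minimal sketch that turns the quoted scores into absolute and relative gains. The scores are the ones cited above. The function names and the "share of remaining failures removed" measure are illustrative choices made for this example, not metrics reported by Anthropic.

```python
# Benchmark scores quoted in the article, in percent: (Opus 4.6, Mythos preview).
SCORES = {
    "SWE-bench Verified": (80.8, 93.9),
    "SWE-bench Pro": (53.4, 77.8),
    "USAMO 2026": (42.3, 97.6),
}


def gains(before, after):
    """Return (absolute gain in points, relative gain in %, % of remaining failures removed).

    The third value is a hypothetical illustrative measure: how much of the
    gap between the old score and 100% the new score closes.
    """
    absolute = after - before
    relative = absolute / before * 100
    failures_removed = absolute / (100 - before) * 100
    return round(absolute, 1), round(relative, 1), round(failures_removed, 1)


def summarize(scores=SCORES):
    """Apply gains() to every benchmark in the table."""
    return {name: gains(before, after) for name, (before, after) in scores.items()}


if __name__ == "__main__":
    for name, (abs_gain, rel_gain, removed) in summarize().items():
        print(f"{name}: +{abs_gain} points, +{rel_gain}% relative, "
              f"{removed}% of remaining failures removed")
```

Read this way, the SWE-bench Verified jump of 13.1 points looks modest in absolute terms, yet it closes roughly two thirds of the gap that Opus 4.6 left to a perfect score.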

On these numbers, it is arguably the strongest model in the world right now.

These are just a few “small” examples. More impressively, in recent weeks, Mythos has autonomously discovered thousands of high-risk zero-day vulnerabilities across major operating systems and browsers, including the Linux kernel, OpenBSD, Firefox, and FFmpeg.

Many vulnerabilities had gone undetected by human security teams for decades. For instance, in the security-focused OpenBSD, Mythos found a remote crash vulnerability that had been hidden for 27 years. Anthropic confidently states that Mythos surpasses any other AI model in cybersecurity capabilities.

This is not just a “better Claude”; it writes code, performs reasoning, and handles security with unprecedented autonomy and depth. Developers had hoped for a “complete liberation of productivity,” but the outcome is:

Anthropic has shut the door.

Currently, the Claude Mythos preview is not available to the public. According to the official statement, the Mythos preview is only used for “defensive cybersecurity” and is accessible only to 12 partners (including AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks) and over 40 organizations that build or maintain critical software infrastructure.

This is part of Anthropic’s Project Glasswing. Anthropic has even allocated $100 million to support over 40 additional organizations in using the Mythos preview to maintain the foundation of the open-source ecosystem.

But why is such a “powerful model” being kept under wraps?

Too Powerful to Release

First, it is clear that the Claude Mythos preview, or similarly powerful supermodels, will eventually be made available to the public. Anthropic has stated explicitly:

“While we currently have no plans to open the Claude Mythos preview to the public, our ultimate goal is to enable users to safely deploy Mythos-level models at scale—not just for cybersecurity, but for the countless other benefits these powerful models will bring.”

As for why it is being held back for now, the official blog suggests that this model is “too dangerous.”

Last year, the Google Threat Intelligence Group (GTIG) identified two real-world malware samples, PromptFlux and PromptSteal. Both connect to commercial models (such as the Gemini API) at runtime to generate malicious scripts dynamically, obfuscate their own code in real time, and create new functionality tailored to the target environment, bypassing traditional signature-based detection.

This is not an isolated case. According to market research firm SQmagazine, the number of reported AI-driven cyberattacks globally has increased by 47%, with over 28 million incidents expected.

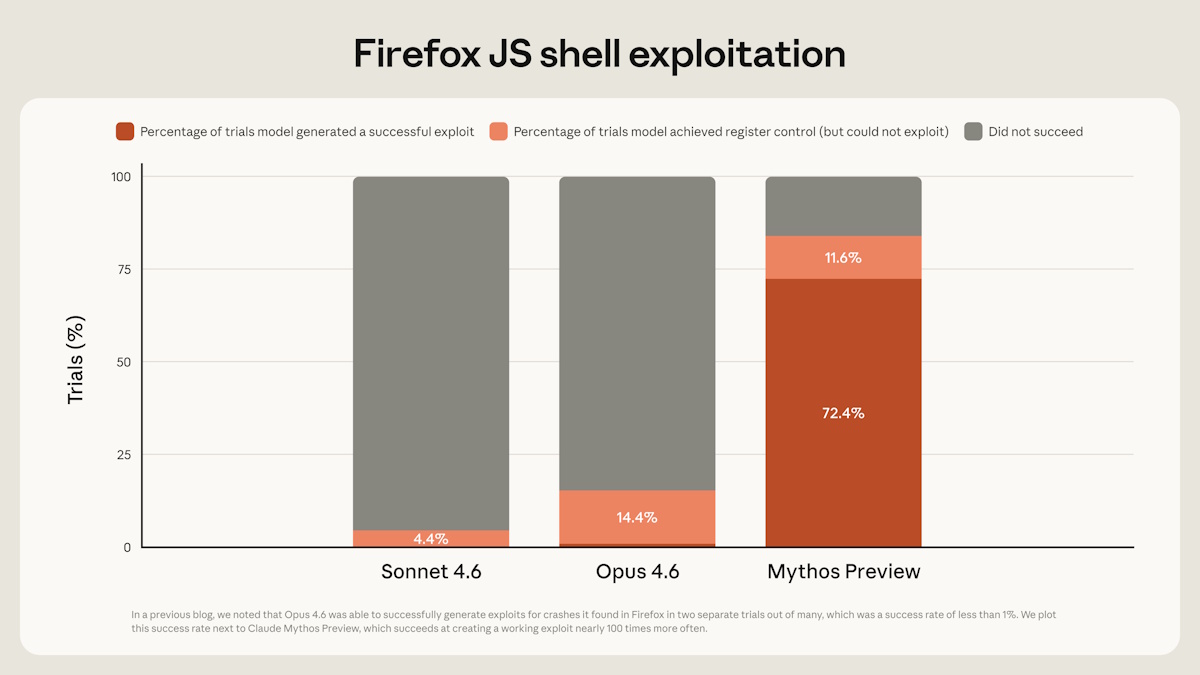

Returning to the Mythos preview, its ability to find vulnerabilities is already apparent. In contrast to the previous strongest model, Opus 4.6, which had a near-zero success rate in autonomously discovering and exploiting vulnerabilities, Mythos’s performance is astonishing.

For example, in testing for a vulnerability found in the Mozilla Firefox 147 JavaScript engine (now patched), Claude Opus 4.6 attempted to exploit the vulnerability hundreds of times but succeeded only twice; in the same test, Claude Mythos successfully exploited the vulnerability 181 times.

Additionally, reports from recent internal red team tests indicate that Mythos’s offensive capabilities have already surpassed those of top human security experts. It does not just “find vulnerabilities”; it can autonomously discover thousands of high-risk zero-day vulnerabilities and chain them together into working exploits.

As is well known, hackers can be divided into white hats and black hats. White hat hackers typically report security vulnerabilities to project maintainers and may even proactively patch them in open-source projects. In contrast, black hat hackers may exploit these vulnerabilities to attack systems.

Mythos can both attack and defend, but its offensive potential is concerning. If it falls into the wrong hands, it could immediately enable AI-driven attack chains. Anthropic itself states that this is not an ordinary cutting-edge model; its general capabilities are strong enough to elevate cyber warfare to a new dimension.

The arms race in computer security has always been a cat-and-mouse game, with attackers and defenders each escalating in response to the other. Over the past two years, the security of large AI models has become a focus for the industry, especially among major companies. For instance, Chinese companies such as ByteDance and Ant Group have hosted attack-defense competitions for large AI models to discover and address the security challenges of the AI era.

However, Anthropic also points out that in the long run, powerful language models like Mythos will be more beneficial for the “blue team” in defense. But in the short term, if Mythos were made publicly available, it would quickly be exploited by attackers to launch unprecedentedly efficient attacks on the global network. The key issue is that defensive actions are more passive, while offensive actions are proactive. Considering the incentives, attackers are more motivated to use models like Mythos.

Thus, to ensure a “smooth transition,” Anthropic has launched Project Glasswing.

Interestingly, the project is named after the glasswing butterfly, a species widely distributed in the Americas and known for its transparent wings. Despite appearing fragile, it can reportedly support up to 40 times its own body weight.

The logic behind Project Glasswing is straightforward: equip defenders with the tools first, so that they can patch vulnerabilities before attackers gain access to AI of a similar level.

From this perspective, it is indeed wise not to release the strongest Claude model to the public. Moreover, even from the standpoint of ordinary Claude users, the temporary non-release of the Claude Mythos preview is more beneficial than harmful.

Not Releasing the Strongest Model Makes Claude More Usable

Many people reacted with disappointment to the news that the Mythos preview is not available: why not let everyone use such a powerful model?

However, if you are an ordinary Claude user or a developer relying on Claude Code for coding and project work, you might find a somewhat counterintuitive fact: the temporary non-release of Mythos is actually more beneficial for us.

Let’s first address a recent pain point that many have felt.

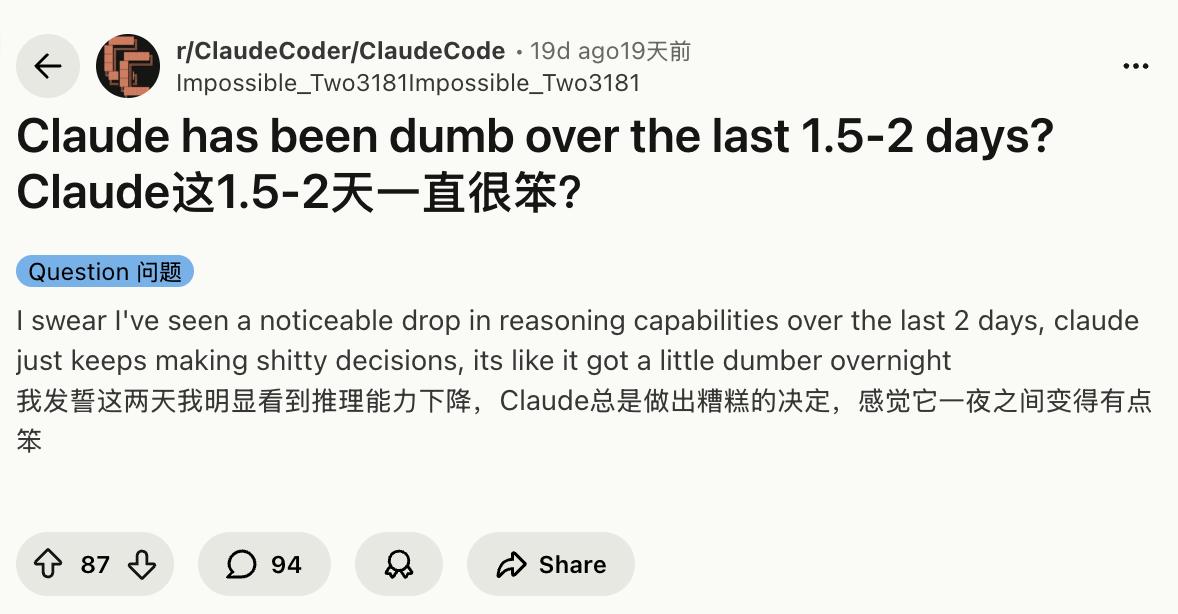

Since February of this year, Claude and Claude Code have experienced a “dramatic performance degradation.” Posts related to this topic have flooded Reddit’s r/ClaudeCode and r/ClaudeAI, with some users posting titles like “4.6 Regression is real!” and others complaining, “Claude Code has been dumb over the last 1.5-2 days.”

Some developers tracked data showing that file read counts dropped from 6-7 times to just 2, and the model has become increasingly “lazy” in complex tasks, often opting for edit-first approaches instead of conducting prior research.

AMD AI Director Stella Laurenzo even publicly stated that Claude Code has become “dumber and lazier,” making it unreliable for complex engineering tasks.

Boris, a member of the Claude Code team, acknowledged on Hacker News that some agentic use cases have regressed. He attributed the core change to the introduction of “redact-thinking” and Adaptive Thinking in February. These changes allowed the model to decide how long to think, resulting in a roughly 67% decrease in thinking depth on complex tasks.

Similar sentiments have been echoed on X, with developers complaining that Claude Code has devolved into an “intern” that requires constant supervision.

Why has this happened?

Training ultra-large models demands massive computational power, and whenever a major company sprints toward its next “strongest model,” the models already in service tend to suffer. Before the release of Gemini 3.0/3.1, Gemini 2.5 Pro drew multiple complaints from developers about becoming less capable after silent updates, with issues like forgetting long context and higher failure rates on logical tasks. Similar situations occurred before the launch of GPT-5, when users reported shorter outputs, laziness, and mechanical responses to complex instructions.

Computational resources are limited, and training a next-level model like Mythos is extremely costly. It can only be done by “squeezing” resources from current models through dynamic load balancing, adaptive effort, and even slight optimizations, which results in the perceived “dumber and lazier” performance.

Additionally, the user base for Claude Code has grown far beyond expectations, straining infrastructure, while the training and testing of the Mythos preview (internally referred to as Capybara) must be given priority on top-tier GPUs. With the Mythos preview announced but not opened to the public, there is no need to worry that serving it will further dilute computational resources and degrade the quality of Claude or Claude Code.

For ordinary Claude users, the experience will be more stable.

On the other hand, Anthropic is using Mythos in Project Glasswing to help major companies and open-source projects fix vulnerabilities. Once these vulnerabilities are patched, all users will benefit indirectly.

Once Anthropic is better prepared to control the risks and has the infrastructure to deploy Mythos-level models safely at scale, ordinary users will get a stable, powerful experience that does not degrade every few days. Rushing a release now would only subject everyone to the pain of resource strain.

Conclusion

The emergence of the Claude Mythos preview has highlighted a harsh yet realistic issue: the more powerful AI becomes, the more real the risks.

When the offensive capabilities of the strongest model exceed what current defense systems can handle, Anthropic’s choice to restrict access is not conservatism but a way to buy time for the entire industry. It lets defenders fortify their foundations and lets ordinary users keep a relatively stable Claude experience, rather than being caught up in the chaos of resource strain and security failures.

For most, this may be the best arrangement at present.
