Claude’s Decline: A Strategic Shift or a Downfall?

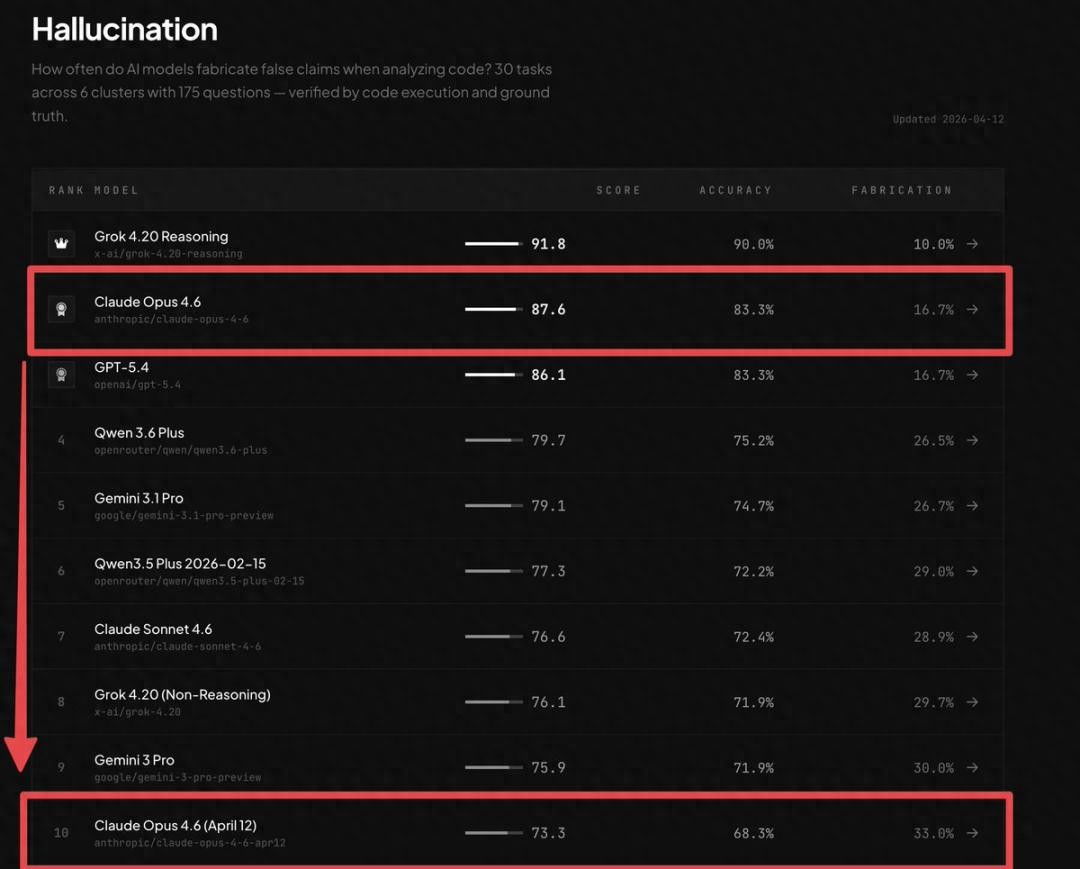

AMD’s AI director recently stated that Claude has become less capable and is now unusable for complex tasks. The latest BridgeBench report points the same way: Claude Opus 4.6 fell from 2nd to 10th place in the global ranking, its accuracy dropped from 83.3% to 68.3%, and its hallucination rate rose by 98%, nearly doubling.
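The figures quoted above mix two kinds of change: the accuracy numbers are absolute percentages, while the 98% hallucination figure is a relative increase. The short Python sketch below separates the two. The function names are illustrative, and the only inputs are the numbers already quoted from the report.

```python
def point_change(before: float, after: float) -> float:
    """Absolute change, in percentage points."""
    return after - before


def relative_change(before: float, after: float) -> float:
    """Change relative to the starting value, in percent."""
    return (after - before) / before * 100


# Accuracy figures quoted above.
accuracy_before, accuracy_after = 83.3, 68.3

points = point_change(accuracy_before, accuracy_after)
relative = relative_change(accuracy_before, accuracy_after)
print(f"Accuracy change: {points:.1f} points ({relative:.1f}% relative)")

# A 98% relative increase multiplies the starting rate by 1.98,
# which is why it reads as "nearly doubling".
hallucination_multiplier = 1 + 98 / 100
print(f"Hallucination rate multiplier: {hallucination_multiplier:.2f}x")
```

In other words, the 15-point accuracy drop is roughly an 18% relative decline, a much smaller relative move than the reported change in hallucination rate.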

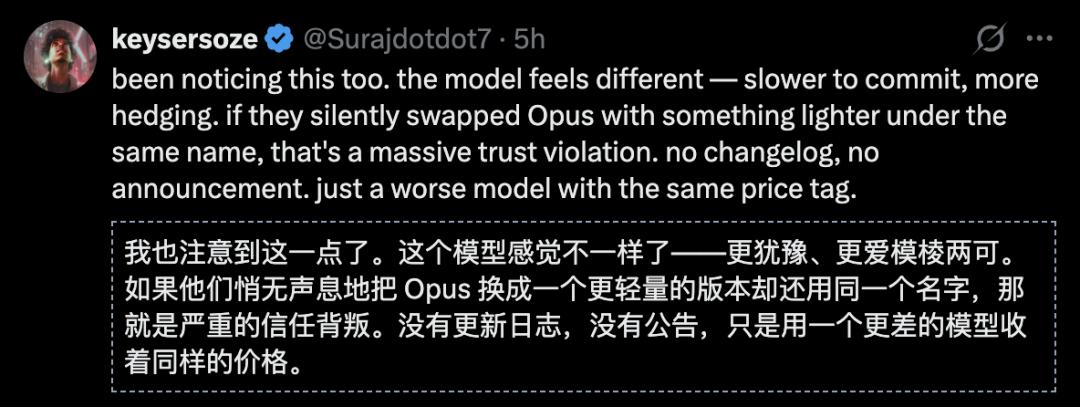

Users feel deceived by the drop in Claude’s performance. Imagine relying on this model for critical tasks, only to discover that it has been swapped for a significantly inferior version without notice.

This has led to widespread questions about whether such changes are even legal, and trust in Anthropic is waning, even among its most loyal supporters. Amid the criticism, however, a potential game-changer surfaced: leaked screenshots of an internal tool interface.

The leaked image shows that Claude Projects is testing a comprehensive full-stack application development system. This isn’t just about helping you write code; it’s about helping you create products.

Users are arguing over model scores while Anthropic is shifting its strategy.

What’s Hidden in the Leaked Image?

The leaked screenshot displays a one-click development kit currently being tested by Claude Projects. It features a range of pre-set templates for AI chatbots, interactive games, business landing pages, and SaaS dashboards, covering the most frequent needs of independent developers.

However, the templates are just the surface. The real shock lies in the full-stack capabilities behind them:

- Authentication? Select and configure.

- Database? Choose and build.

- Frontend interface? Describe and generate.

- Deployment? One-click completion.

This is not just ‘AI-assisted programming’. This is ‘AI replacing programming’, eliminating the need for your skills.

To understand this statement, we need to recognize the current landscape of AI programming tools:

- Cursor aims to make programmers faster in their IDEs, optimizing coding speed while keeping programmers in the lead.

- Replit allows non-programmers to code, lowering the entry barrier but still requiring an understanding of code logic.

- Vercel makes deployment seamless, addressing the final mile while requiring users to navigate the earlier stages themselves.

Each tool targets a specific part of the software development chain, excelling in its domain. However, Claude’s ambition is on a different level entirely.

Cursor makes programmers ten times faster, Replit enables non-programmers to code, but Claude aims to make ‘coding’ itself obsolete.

The former represents an efficiency revolution, while the latter signifies the extinction of a category.

According to leaked information, the underlying engine powering this system is Opus 4.6—the very model that has been ridiculed for its decline.

Is Mythos’s ‘Weakness’ Intentional?

The most critical and controversial judgment may be that Anthropic might not care about Mythos’s ranking.

This may sound like an excuse for a failure, but let’s do the math. When your strategic goal is to become a ‘full-stack application platform’, the role of the model changes fundamentally. It no longer needs to be ‘the smartest’; it just needs to be ‘good enough.’

In platform competition, the decisive factor has never been the horsepower of the underlying engine but the depth of the upper ecosystem’s stickiness. Windows won over Mac not because its operating system was more elegant, but because its software ecosystem was richer. Android crushed Windows Phone not due to a more advanced kernel, but because it had more developers.

In platform wars, ‘best’ is never the reason for victory; ‘most widely used’ is.

Dario Amodei has repeatedly stated in public, ‘Coding will die.’ The leak of the full-stack builder provides tangible evidence for this statement. Dario is not making a prophecy; he is executing a roadmap.

If this reasoning holds, then the benchmark picture takes on a completely different meaning: Mythos leads GPT-5.4 Pro on HLE without tools (56.8 vs 42.7), is effectively tied on GPQA (94.4 vs 94.5), and trails on BrowseComp (86.9 vs 89.3).

It’s not that ‘Anthropic lost’; rather, ‘Anthropic deliberately chose not to focus here.’

Should it invest limited computational resources into maintaining a fictional ‘number one’ label or direct those resources towards a full-stack builder capable of creating commercial value?

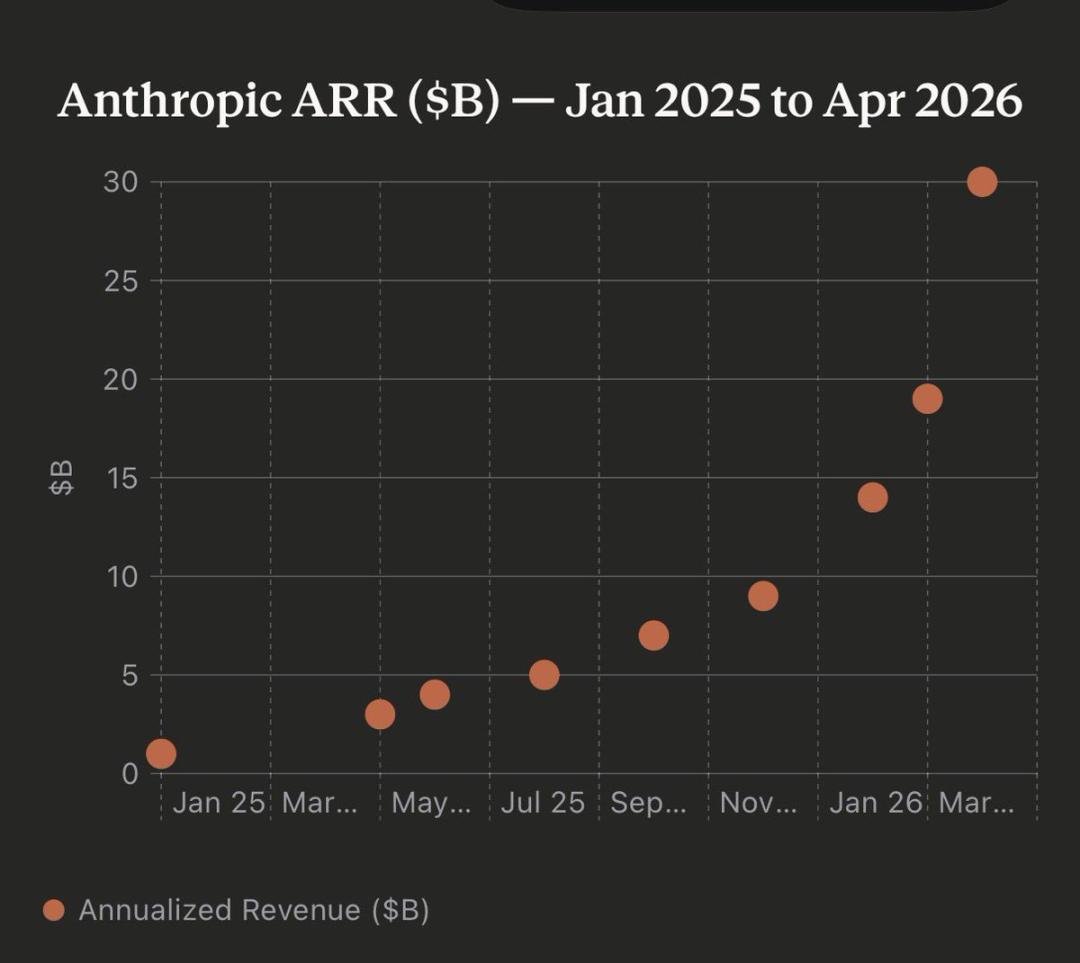

For a company with an annual revenue of $30 billion that needs to prove its commercialization ability to investors, this choice is not difficult. As long as the model is adequate, platform lock-in is the real moat.

The harsh truth of business competition is that users do not care if your GPQA score is 94.4 or 94.5; they care about whether ‘I can run the app with just one command.’

The Fear After Reaching $30 Billion

Anthropic’s annual revenue has just surpassed $30 billion, exceeding OpenAI’s.

In just 15 months, Anthropic’s annual revenue grew from $1 billion to $30 billion. This is a figure that would make any startup pop champagne.
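To get a feel for how steep that curve is, the sketch below computes the constant monthly growth rate implied by the two figures quoted above. It assumes smooth compounding over the 15 months, which real revenue would not follow exactly, and the function name is illustrative.

```python
def implied_monthly_growth(start: float, end: float, months: int) -> float:
    """Constant monthly growth rate that turns `start` into `end` over `months`."""
    return (end / start) ** (1 / months) - 1


# Figures quoted above: $1 billion to $30 billion in 15 months.
rate = implied_monthly_growth(1.0, 30.0, 15)
print(f"Implied compound growth: {rate:.1%} per month")
```

Under that assumption, revenue would have had to grow by roughly a quarter every month, for 15 months in a row.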

However, if you are Dario Amodei, your biggest emotion at this moment is not celebration but fear. Most of this $30 billion comes from API calls, which is essentially a highly dangerous business model.

Why? Because APIs mean your customers are using your capabilities to build their own products. Today they might use Claude’s interface to create an AI customer service platform, tomorrow an AI writing tool, and the day after an AI programming assistant.

Every successful customer builds their own skyscraper on your foundation. It sounds great—until one day, another model company offers a cheaper, similarly effective API, and your customers migrate en masse overnight.

This is the nightmare of ‘model commoditization’: as the differences between models diminish, API pricing becomes a winner-less price war.

OpenAI feels this fear, which is why it is aggressively developing consumer products—ChatGPT, GPTs, custom assistants. Google feels this fear too, hence it integrates Gemini into every one of its products.

They are all doing the same thing: before models become cheap commodities, they are building platforms that users cannot live without.

Anthropic’s full-stack builder is the most radical version of this logic. Its underlying message is:

Rather than wait for others to build a platform using my API and then risk being kicked out when models drop in price, I should build the platform myself.

You won’t need to call my API anymore; you can directly create apps on my platform. Your user data, your workflows, your deployment environment—all reside with me. When that day comes, if you want to switch models, you can, but your entire business will have to start over.

This is not product innovation; this is survival instinct.

The $30 billion revenue proves Anthropic can make money, but the leaked image reveals its true anxiety—making money is not enough; it must ensure others cannot leave.

Conclusion: Stars and Illusions

Stepping back from the business narrative, let’s return to the technical judgment.

Currently, the strongest large models—whether Claude, GPT, or Gemini—are approximately at 70% capability. The rate of increase in this number has visibly slowed over the past six months.

Moving from 70% to 100% is not about rankings or gaining a few more GPQA points. It is about becoming irreplaceable infrastructure, like the power grid: you don’t care what turbines the power plant uses; you just know the lights come on when you flip the switch and the room cools when you turn on the air conditioning.

Anthropic’s full-stack builder is the first sign of an AI company seriously pursuing this path of ‘infrastructuralization’.

No longer fixated on the vanity war of ‘my model is 0.1 points smarter than yours,’ it directly addresses a more fundamental question: how to ensure a billion people unknowingly use my products every day?

Because the true determinant of AI’s future has never been whose test scores are higher, but who becomes that indispensable infrastructure.
